by Cliff Landis, Christine Wiseman, Allyson F. Smith, Matthew Stephens

Cultural Heritage Emergency Response in Georgia

As the largest U.S. state east of the Mississippi River, with 159 counties, Georgia has extensive and diverse natural, cultural and historic (NCH) sites that preserve and document the unique history and culture of the state. These NCH sites are critical to cultural tourism, and include libraries, museums, archives, historical societies, historic sites, state parks, national parks and performing arts organizations. In recent years a spate of natural disasters (including Hurricanes Matthew, Irma, Michael, Sally and Laura, as well as regional flooding and a very active tornado season in southwest and northwest Georgia) has damaged historic structures, important documentary and artifact collections and valued cultural resources throughout the state. The 2020 hurricane season was the most active Atlantic season on record, with 30 named storms, 13 hurricanes and 6 major hurricanes [1]. Furthermore, responses to these events were hampered by the COVID-19 pandemic. These natural disasters have also significantly impacted, and sometimes closed, community organizations important to citizens coping with disasters, such as public libraries and local archives. A rapid response is critical to successfully salvaging NCH organizations and collections that are important to our citizens and economy.

Georgia, however, did not have a means for quickly identifying NCH sites in areas impacted by disasters, thus impeding response. Having an up-to-date and comprehensive inventory of existing NCH sites is essential to preventing loss of important NCH resources and limiting the financial impact of a disaster. Only with accurate geographic locations of these resources can emergency responders and cultural heritage managers work together to expedite the recovery process.

Today, Georgia has a robust community of organizations that cooperate and collaborate to mitigate damage to cultural heritage collections in the event of a disaster, but this was not always the case. The destruction of cultural heritage collections and organizations inflicted by Hurricanes Katrina and Rita in 2005 served as a wake-up call in the cultural heritage community across the Southeast and the nation. In the same year, a groundbreaking nationwide survey, the Heritage Health Index, was published by Heritage Preservation and the Institute of Museum and Library Services. The Heritage Health Index was the first survey to assess the condition and needs of U.S. collections held in the public trust. Responses related to disaster planning found that an alarming “80% of collecting institutions did not have an emergency plan or staff trained to carry it out.” [2] Although cultural organizations within Georgia were not severely impacted by Hurricane Katrina, the destructive nature of the storm coupled with the Heritage Health Index galvanized the community to begin collaborative efforts to increase awareness about the importance of disaster planning in the cultural heritage ecosystem.

Modeled on the federal planning structure, Georgia’s emergency planning is organized into 14 Emergency Support Functions (ESF). NCH organizations are included in the State of Georgia Emergency Operations Plan ESF-11 Annex [3], which is coordinated by the Department of Agriculture and the Department of Natural Resources. Under these primary agencies, support agencies and organizations are included in the plan, including the Georgia Archives, Georgia Historical Society, Heritage Emergency Response Alliance (HERA), and Savannah Heritage Emergency Response (SHER). These NCH organizations were added during the 2015 update, which was the result of years of building relationships with state agencies and first responders. Since then, the NCH community has continued to cultivate relationships within the primary and support agencies of ESF-11 to ensure that cultural collections are considered in disaster planning and recovery, in addition to the goals of saving lives, protecting property and restoring essential services.

Pilot and Project Design

Linked open data is “a group of technologies and standards that enables 1) writing factual statements in a machine-readable format, 2) linking those factual statements to one another, and 3) publishing those statements on the web with an open license for anyone to access.”[4] Wikidata (https://www.wikidata.org/) is the linked open data knowledge graph (database of facts) produced by the Wikimedia Foundation, alongside sister projects like Wikipedia.

In 2018, the Atlanta University Center Robert W. Woodruff Library conducted a pilot project to test the feasibility of updating an existing but out-of-date NCH data set. The Georgia Historical Records Advisory Council’s Historical and Cultural Organizations Directory [5] was web-scraped and placed into a table. The data was enhanced using free web tools like GeoCod.io (https://www.geocod.io/) and MapLarge’s Geocoder (https://geocoder.maplarge.com/Geocoder) to include geospatial coordinates and county information. A subset of ten records was reconciled against Wikidata using OpenRefine, a free data cleaning tool. This subset was then uploaded with OpenRefine to Wikidata [6], enabling us to query the data and display the results using the Wikidata Query Service. Two example displays were created and published online [7]. These examples showed 1) an auto-generated list of contact information for NCHs in an area (City/County/State), and 2) an auto-generated map of all NCHs in a geographic area.

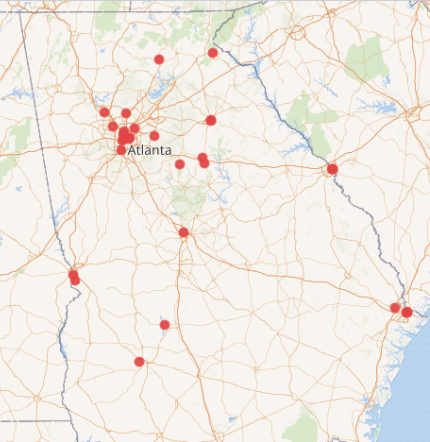

Figure 1. Wikidata in 2018: Galleries, Libraries, Archives, and Museums (GLAM) in Georgia with coordinate information: 40 institutions.

Building upon the growing strength of Georgia’s NCH emergency response community network, we used this pilot example to present the idea of an openly editable directory to state-level NCH organizations. The idea was well received, with most organizations expressing a willingness to serve as partner organizations to provide source datasets and meet bimonthly to provide feedback [8]. Some organizations committed additional resources for hosting the website and performing annual updates. These additional resource commitments ensured that the project would be sustainable and well-maintained over time, rather than a one-off project.

After gathering support for the idea, we designed the project to be as open as possible. Our design principles included:

- Flexible structure – Wikidata is able to represent many relationships in repeatable fields; records are easily enhanced with additional data

- Decentralized updates – Simple edits can be done manually by anyone; no need for an institutional or individual “owner” to grant access to the data for regular updates, thus removing bottlenecks

- Open license – Facts represented in Wikidata are public domain

- Sourced information – Reference links would be included for each statement, for verification and to provide direction for future updates

- Broad access and free implementation – The project will be completed using free software

- Model for other states – By documenting and presenting on the processes used, the project would provide other states with a model that can be easily reproduced, adapted, and improved

- Graduate student paid internship – The project would provide a graduate student in Library and Information Science, Archival Studies or a related field with practical experience working with linked open data.

In June 2019, the Atlanta University Center Robert W. Woodruff Library received a LYRASIS Catalyst Fund grant to support the project.

Data Workflow

After the successful pilot workflow test, we scaled up the workflow into a seven-step process:

- Receive dataset – use Python scripts to scrape websites, download available datasets, or request datasets from project partners

- Format to spreadsheet template – copy relevant data over to the dataset template in CSV (comma separated value) format

- Index and remove duplicate entries – search each organization name in Wikidata to see if a record exists and to see if we’ve already created/edited the record in a previous upload. If so, delete the duplicate entry to prevent performing duplicate enrichment work

- Gather, verify, enrich, and create references for data – perform web, phone, and email research to verify the existence and directory information for the organization. Enrich with county and geocoordinate location information. Use the Internet Archive Wayback Machine to create snapshots for references

- Reconcile in OpenRefine – create a data dictionary to identify and prioritize which data points are most valuable for disaster recovery, and to match them to Wikidata’s data model [9]

- Upload dataset to Wikidata – using OpenRefine, upload the revised and referenced records in the dataset to Wikidata

- Perform quality control on ingests – review each record in Wikidata and make any post-upload edits necessary. Examples include verifying geocoordinate locations, removing duplicate field entries, selecting a “preferred rank” value for multi-value fields, and adding parent/subsidiary organization relationships.

To help other organizations who may want to adapt or reuse this workflow, we’ve developed a Workflow Manual that describes this step-by-step process in greater depth [10].

Figure 2. Mapping NCH Organizations’ directory information to Wikidata’s schema.

Website application

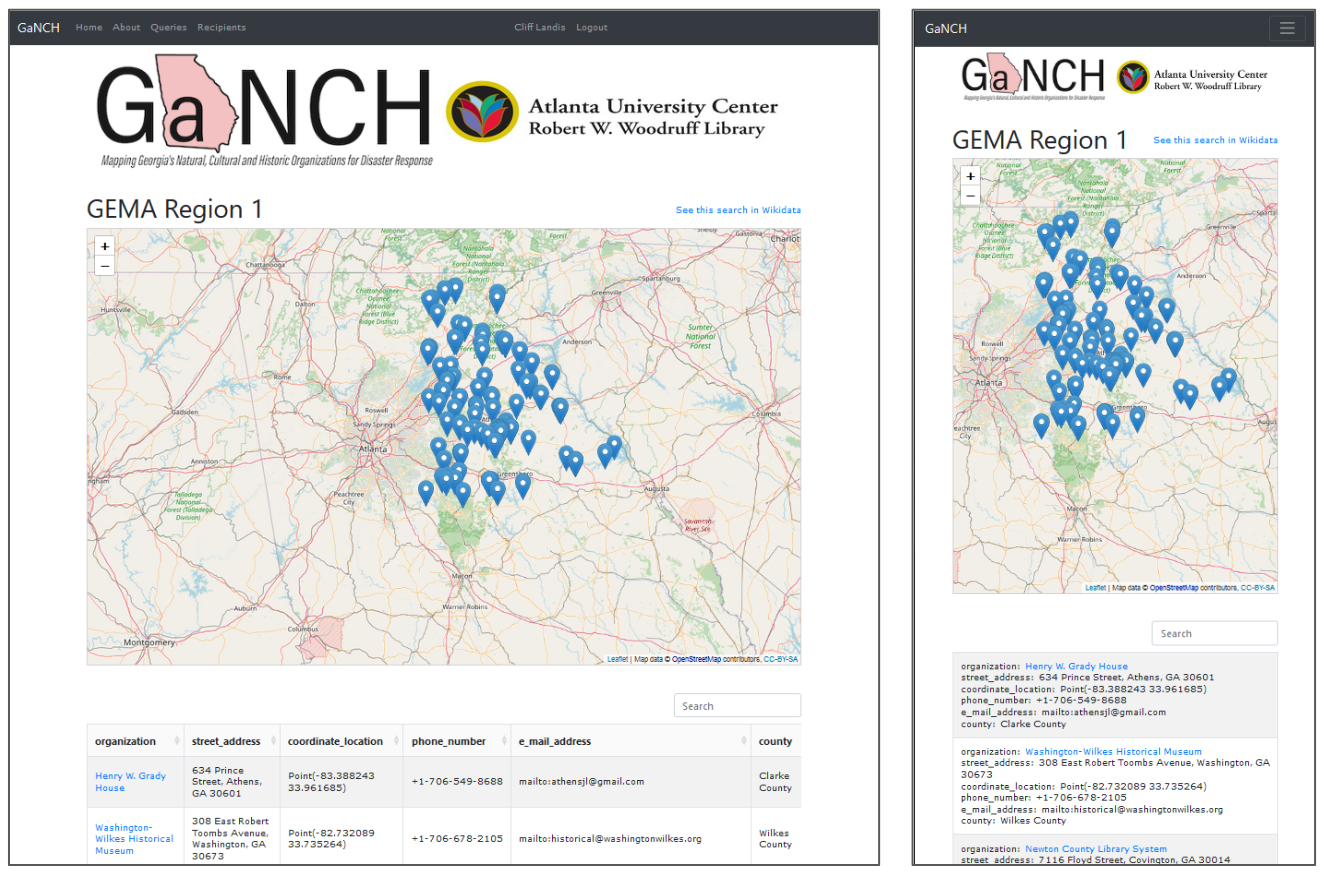

While datasets were being processed through the data workflow, we developed a mobile-friendly website application that uses prepared linked data queries to display the results of all our data. At its heart the GaNCH application is a web proxy, fetching and re-presenting information from Wikidata, caching it locally. Wikidata provides the Wikidata Query Service, which lets users construct requests in the SPARQL query language. Our site facilitates the housing of queries for NCHs at various levels in Georgia and presents the resulting datasets in both tabular and cartographic formats.

Figure 3. Desktop and Mobile views of GaNCH search results, showing both cartographic and tabular displays.

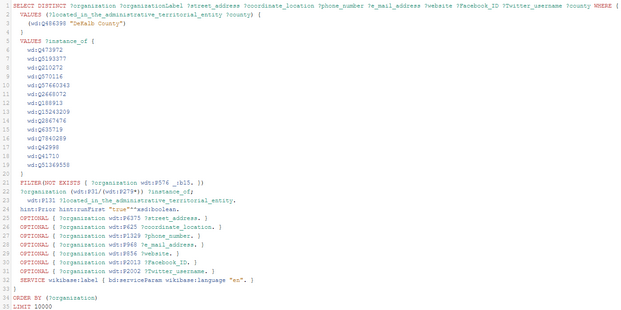

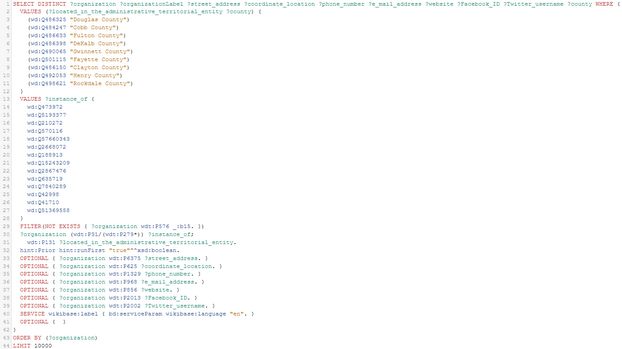

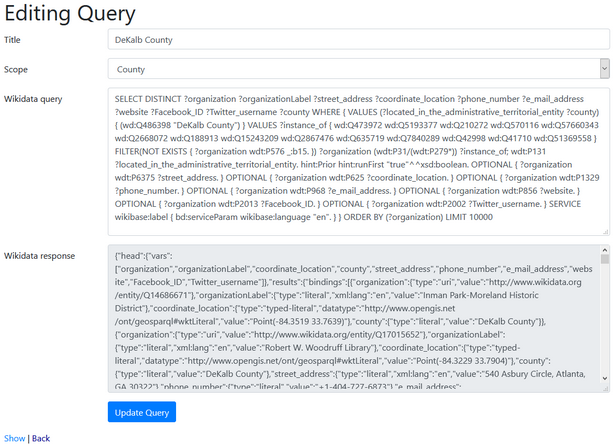

Sustaining a website like this requires user accounts and related authentication, but at a very simple level. Authenticated accounts are restricted to project staff, who can create, read, update, and delete the SPARQL queries and view the responses in their raw form. This also provides us with an audit trail for query edits. However, unauthenticated public users are able to view a Wikidata result set for a given Georgia county or GEMA region, or the state as a whole, as both a table and an interactive map. Public users can also follow instructions on the site to export the tabular data directly from Wikidata. Secondary requirements included some instructional pages for public visitors to the site, responsive design to facilitate use on mobile devices, and some scripted tasks to update the 159 Georgia county queries in a single batch, to relieve project members of tedious data entry.

Figure 4. The “DeKalb County” query in the Wikidata Query Service, available at https://w.wiki/tzi .

Figure 5. The “GEMA Region 7” query in the Wikidata Query Service, showing the combination of several counties into a single result set, available at https://w.wiki/tzk.

Figure 6. Editing the “DeKalb County” query on the back-end of the GaNCH website, showing both the full SPARQL query and the Wikidata response.
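The county and region queries described above share one shape: a list of county items, and a pattern that selects organizations located in any of them. The sketch below is an illustration in Python, not the project's Ruby code or its actual queries (those are available at the w.wiki links in the figure captions). It assumes the queries match on P131 (located in the administrative territorial entity) and P625 (coordinate location), and the Q-identifiers in the usage example are placeholders rather than real Georgia counties.

```python
from typing import Dict, Iterable

# Assumed query shape: the selected variables and the use of a VALUES list
# are illustrative, not copied from the GaNCH site.
QUERY_TEMPLATE = """\
SELECT DISTINCT ?organization ?organizationLabel ?coordinates WHERE {{
  VALUES ?county {{ {counties} }}
  ?organization wdt:P131 ?county ;
                wdt:P625 ?coordinates .
  SERVICE wikibase:label {{ bd:serviceParam wikibase:language "en". }}
}}
"""


def build_query(county_qids: Iterable[str]) -> str:
    """Return a SPARQL query covering one county or a multi-county region."""
    qids = list(county_qids)
    if not qids:
        raise ValueError("at least one county identifier is required")
    for qid in qids:
        # Accept only well-formed item identifiers such as "Q123".
        if not (qid.startswith("Q") and qid[1:].isdigit()):
            raise ValueError(f"not a Wikidata item identifier: {qid!r}")
    counties = " ".join(f"wd:{qid}" for qid in qids)
    return QUERY_TEMPLATE.format(counties=counties)


def build_county_queries(counties: Dict[str, str]) -> Dict[str, str]:
    """Generate one query per county in a single batch (name -> SPARQL)."""
    return {name: build_query([qid]) for name, qid in counties.items()}


if __name__ == "__main__":
    # Placeholder identifiers, for illustration only.
    print(build_query(["Q1111111", "Q2222222"]))
```

Generating the queries from a template like this is one way to avoid entering 159 near-identical county queries by hand; a region query is simply the same template with several counties in the `VALUES` list.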

The site interfaces with Wikidata’s SPARQL API and stores the query response as text/json, parsing and transforming the responses as needed for presentation to the user. One obvious need was to combine the organization identifier and its human-readable label into a single column (for creating a hyperlink). Thus, JSON from Wikidata in the shape of

    {
      "organization": {
        "type": "uri",
        "value": "http://www.wikidata.org/entity/Q69766025"
      },
      "organizationLabel": {
        "type": "literal",
        "xml:lang": "en",
        "value": "Alma-Bacon County Public Library"
      }
    }

is transformed into an object like this for use in a responsive Bootstrap table:

    {
      "type" => "uri",
      "value" => "http://www.wikidata.org/entity/Q69766025",
      "as_link" => "<a data-value=\"Alma-Bacon County Public Library\" target=\"_new\" href=\"http://www.wikidata.org/entity/Q69766025\">Alma-Bacon County Public Library</a>",
      "search_data" => "Alma-Bacon County Public Library"
    }
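The transformation above can be sketched as a small function. This is a Python illustration of the idea rather than the site's Ruby implementation; the function name `to_link_cell` is hypothetical, and the HTML escaping is an added precaution that the original code may or may not perform.

```python
from html import escape
from typing import Dict


def to_link_cell(binding: Dict[str, Dict[str, str]], key: str = "organization") -> Dict[str, str]:
    """Merge a URI variable and its '<key>Label' companion into one table cell."""
    uri = binding[key]["value"]
    label = binding[f"{key}Label"]["value"]
    # Escape both values before placing them inside HTML attributes and text.
    safe_uri = escape(uri, quote=True)
    safe_label = escape(label, quote=True)
    return {
        "type": binding[key]["type"],
        "value": uri,
        "as_link": (
            f'<a data-value="{safe_label}" target="_new" '
            f'href="{safe_uri}">{safe_label}</a>'
        ),
        # Plain-text copy of the label, so the table can search and sort on it.
        "search_data": label,
    }


if __name__ == "__main__":
    example = {
        "organization": {
            "type": "uri",
            "value": "http://www.wikidata.org/entity/Q69766025",
        },
        "organizationLabel": {
            "type": "literal",
            "xml:lang": "en",
            "value": "Alma-Bacon County Public Library",
        },
    }
    print(to_link_cell(example)["as_link"])
```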

In the database, both query and response are stored as text, and when read, the response JSON is parsed into an object that can be traversed. The Wikidata response includes the response parameters as a header row, which will be familiar to most spreadsheet users. The header serves as the source of the table structure, though as mentioned we did find a need to group related columns such as ‘organization’ and ‘organizationLabel’ into one to present a readable table. Keeping presentational logic out of the data storage layer of the application was a standard separation of concerns, the idea being that what is stored as “response” actually matches the response from the data source. A further goal was to allow admin users to edit their queries (checking their work against the Wikidata Query Service, which offers an online query editor), and this arrangement allows for easy troubleshooting of purely data errors, as opposed to errors in the application’s rendering of the page.
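As a rough illustration of reading the header row and grouping label columns, the Python sketch below parses a stored response in the standard SPARQL JSON results layout (`head.vars` for the header, `results.bindings` for the rows). The function names and the grouping rule (fold `xLabel` into `x` whenever both appear in the header) are assumptions made for the example, not a description of the site's code.

```python
import json
from typing import Any, Dict, List, Tuple


def table_columns(variables: List[str]) -> List[str]:
    """Drop each '<name>Label' variable whose base variable is also present."""
    names = set(variables)
    return [
        v for v in variables
        if not (v.endswith("Label") and v[: -len("Label")] in names)
    ]


def parse_response(text: str) -> Tuple[List[str], List[Dict[str, Any]]]:
    """Turn stored response text into (columns, rows) for display."""
    data = json.loads(text)
    columns = table_columns(data["head"]["vars"])
    rows = []
    for binding in data["results"]["bindings"]:
        row: Dict[str, Any] = {}
        for col in columns:
            cell = binding.get(col)
            label = binding.get(col + "Label")
            if cell is None:
                row[col] = ""  # variable unbound for this row
            elif label is not None:
                row[col] = {"uri": cell["value"], "label": label["value"]}
            else:
                row[col] = cell["value"]
        rows.append(row)
    return columns, rows
```

Because the stored text is left untouched and only transformed on read, a data problem can be checked by pasting the same query into the Wikidata Query Service and comparing its output with the stored response.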

We did similar on-the-fly cleanup of some fields to adjust Wikidata-formatted values to work in the map, as latitude and longitude needed to be split into their own parameters. In addition, if Wikidata records included an email for a given institution, the site must be capable of sending occasional emails to that organization, to encourage them to keep their public records up-to-date. To that end, the response JSON was scanned for such emails, which are compiled into a separate list in the database, along with their related institutional identifiers.
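Both cleanup steps can be sketched briefly. The Wikidata Query Service returns coordinates as a well-known-text literal of the form `Point(longitude latitude)`, so the order has to be swapped for most mapping libraries. The Python below is an illustration only: the variable names `email` and `organization`, the handling of a `mailto:` prefix, and the sample values are assumptions, not details taken from the GaNCH code.

```python
import re
from typing import Dict, List, Tuple

_NUMBER = r"-?\d+(?:\.\d+)?(?:[eE][-+]?\d+)?"
_POINT = re.compile(rf"^Point\(\s*({_NUMBER})\s+({_NUMBER})\s*\)$", re.IGNORECASE)


def split_point(wkt: str) -> Tuple[float, float]:
    """Split 'Point(lon lat)' into a (latitude, longitude) pair."""
    match = _POINT.match(wkt.strip())
    if match is None:
        raise ValueError(f"not a WKT point: {wkt!r}")
    longitude, latitude = float(match.group(1)), float(match.group(2))
    return latitude, longitude


def collect_emails(
    bindings: List[Dict[str, Dict[str, str]]],
    email_key: str = "email",
    org_key: str = "organization",
) -> List[Tuple[str, str]]:
    """Return unique (item identifier, email address) pairs found in a result set."""
    pairs: List[Tuple[str, str]] = []
    for binding in bindings:
        if email_key not in binding or org_key not in binding:
            continue
        address = binding[email_key]["value"]
        if address.lower().startswith("mailto:"):
            address = address[len("mailto:"):]
        # The item identifier is the last path segment of the entity URI.
        qid = binding[org_key]["value"].rsplit("/", 1)[-1]
        if (qid, address) not in pairs:
            pairs.append((qid, address))
    return pairs
```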

Some other requirements were shaped by our partnership with GALILEO, Georgia’s virtual library. This consortium generously offered to host our project. Their expertise with Ruby on Rails made that framework a logical choice in which to write our website code. In addition, GALILEO makes use of the GitLab DevOps suite of tools, and this was an integral part of our development and release process.

Rails made sense to us for a number of reasons, most notably because Ruby offers a reliable SPARQL library, which meant that queries could be easily managed within Rails. Initial creation and testing of those queries, we found, was best done on Wikidata’s own query editor. GitLab also offered many desirable features, such as continuous integration testing, automated deployment, and issue tracking.

With a limited budget for developer time, it was essential to make good use of open source tools and community knowledge. We chose not to embed Wikidata’s own maps in an iframe, as the Wikimedia Foundation Maps Terms of Use suggest that relying on them might be counterproductive (“please respect our limited services and resources. We reserve the right to discontinue.… If you use the service too heavily, it could affect the service’s stability for others or degrade our quality of service.”).[11] We instead chose OpenStreetMap as the source of our geographic data, and together with the Leaflet JavaScript library for interactive maps, it offered us the ability to plot NCHs on a map with minimal effort, while also ensuring that the maps would work if Wikidata was unavailable. Likewise, the Bootstrap and Bootstrap-table UI frameworks made the creation of sortable, searchable tables straightforward. We expect that these will also be easy to maintain in the long term, due to their widespread use. The site includes a job scheduler (we used rufus-scheduler, a popular Ruby library), which runs a nightly update, querying Wikidata. If no response is received, the site does not update its local data, which means that, for these items at least, our site provides a backup in case Wikidata is itself disrupted or unavailable.
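The "keep the old copy if Wikidata does not answer" rule of the nightly update can be sketched as follows. This is a Python illustration of the behavior described, not the rufus-scheduler job itself; `refresh_cache` and the injected `fetch` callable are hypothetical names, and treating network failures as `OSError` is an assumption that suits Python's standard library.

```python
from typing import Callable, Dict, List, Optional


def refresh_cache(
    cache: Dict[str, str],
    queries: Dict[str, str],
    fetch: Callable[[str], Optional[str]],
) -> List[str]:
    """Re-run every stored query, overwriting the cached response only on success.

    Returns the names of the queries whose cached response was replaced.
    """
    updated: List[str] = []
    for name, sparql in queries.items():
        try:
            response = fetch(sparql)
        except OSError:
            # Network failure or timeout: leave the previous response in place.
            response = None
        if response:
            cache[name] = response
            updated.append(name)
    return updated


if __name__ == "__main__":
    cache = {"DeKalb County": '{"head": {"vars": []}}'}

    def unreachable(_query: str) -> Optional[str]:
        raise ConnectionError("query service unavailable")

    print(refresh_cache(cache, {"DeKalb County": "SELECT ..."}, unreachable))
    print(cache)
```

Passing the fetch function in as an argument keeps the fallback rule easy to exercise without contacting Wikidata.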

Points of unexpected effort included Rails 6 setup, as this new version of Rails significantly changes project setup and structure (for the better). We also found a need to create numerous functions to transform and clean up various data items from within the large and heterogeneous JSON objects returned by Wikidata. These are available in the GaNCH website’s GitHub repository [12].

Several aspects of system administration/environment setup (email handling, security/server configurations) required significant time. While deployment went smoothly, there was the usual time and effort getting team members up to speed on the desired processes, as well as remotely troubleshooting issues as they arose. In contrast, routine preparation in beginning a Rails 6 project for the first time led to many useful time savers. Much of the navigation UI and authentication work was expedited by implementing ideas found in Chris Slade’s excellent YouTube tutorial on Rails 6 development [13]. This allowed us to overcome a growing challenge in Rails development, the reliance on outdated information and design patterns. We anticipate that this will improve the site’s sustainability.

GitLab’s stringent deployment criteria required regular upgrading of components as vulnerabilities/exploits were discovered by the community. This means that the site has seen regular maintenance and is staying up-to-date with minimal effort. GitLab’s issue tracking made work that was, by necessity, 100% remote much smoother than it might have been.

To ensure that the final website would meet cultural heritage emergency responders’ needs, we facilitated a focus group with emergency response coordinators at GEMA, HERA, and SHER in April 2020. The feedback from this focus group session was instrumental, as it resulted in the inclusion of the eight GEMA Regions, which are used to coordinate emergency response at the state level. We also adjusted the website to include instructions on how to export search results via the Wikidata Query Service; this enables cultural heritage emergency responders to integrate the results into the state-level WebEOC system.

Addressing challenges

Overall the project worked well. The most frequent concern that people have raised about using Wikidata for this project is the risk of vandalism, but over the last two years we’ve only seen a handful of minor instances of unhelpful edits, which were easy to revert. This is possible because records that have been created or edited by a project staff member are automatically added to that member’s Wikidata Watchlist, allowing them to monitor ongoing changes. Weekly review of the Watchlist allows project staff members to quickly identify any unhelpful edits and revert them. It was a pleasant surprise to see that the most commonly raised concern did not present a challenge for the project.

However, we did experience a few unexpected challenges. One problem we encountered was outdated information persisting in the initial datasets we received; in some cases NCH organizations had been dissolved for over a decade, yet still persisted in government and professional organization directories. The process to verify dissolved dates included reaching out directly to the last available contact, reviewing newspaper articles, and utilizing the Trip Advisor and the Georgia Corporations Division Business Search websites. Whenever possible, we included these dissolved organizations in our uploads, and included the dissolution date with a reference to show evidence that the organization no longer exists. This not only provides public documentation of the organization’s closure, but it also allows us to exclude these closed organizations from our search results to prevent cultural heritage emergency responders from wasting time during a disaster.

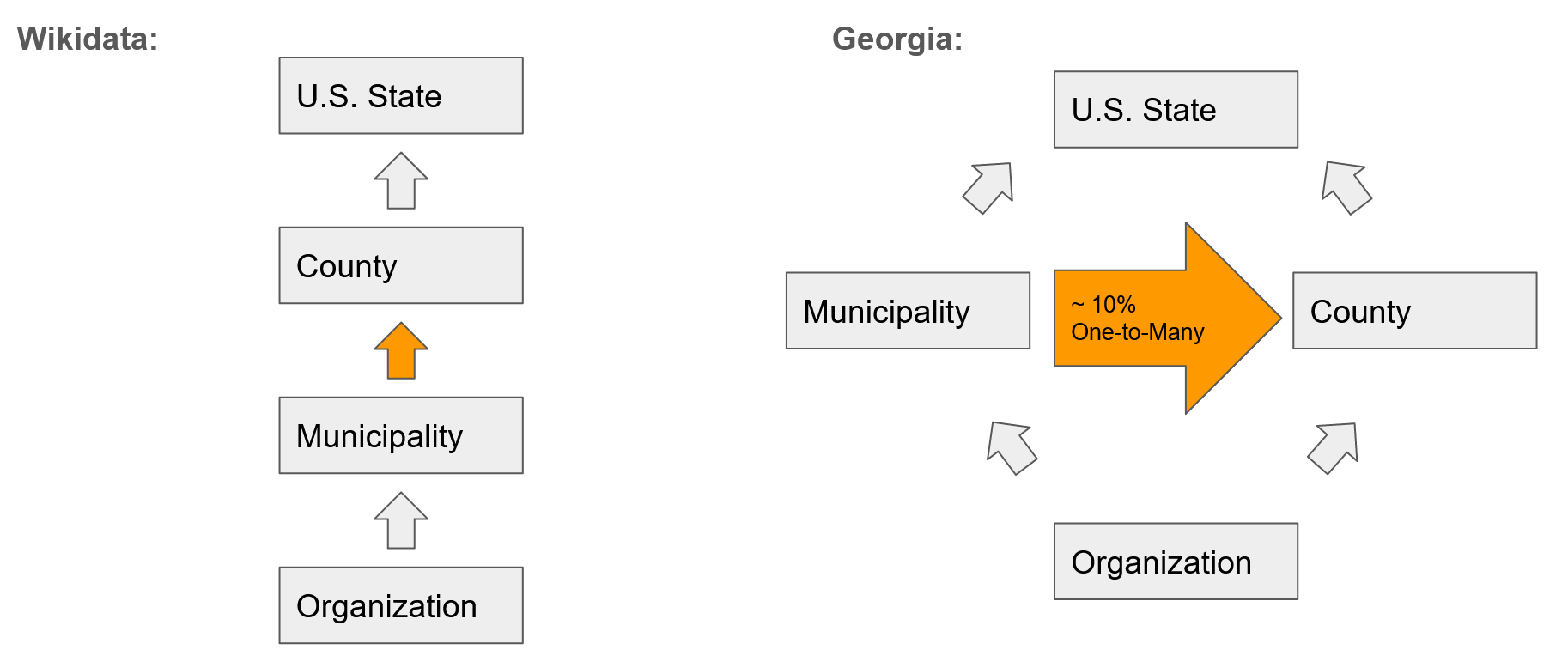

Another issue we discovered was the challenge of trying to map real-world messiness to the much cleaner data model of Wikidata. The problem was not only trying to map it, but trying to reach consensus with the Wikidata community on how it should be handled. In Wikidata, P131 is the property that records the administrative territorial entity in which an item is located [14]. The scope of P131 is broad and can cover municipalities, counties, and states. The Wikidata documentation for P131 recommends listing only the single most local administrative territorial entity, since the property is supposed to be both transitive and hierarchical, and to cascade upwards as seen in Figure 7 on the left.

Figure 7. Diagram showing the conflict of the transitive property of P131 between Wikidata and Georgia.

But in Georgia, the borders of municipalities and counties were drawn independently, and about 10% of the municipalities in Georgia exist in more than one county; as such, they are not transitive, as seen in Figure 7 on the right. This means that our project cannot rely on Wikidata’s cascading hierarchy to be correct when it comes to searching for organizations by county. We reached out to the Wikidata community to try and find a solution to this challenge. After several proposals and over a month of discussion on four separate Wikidata pages, no consensus was reached. As a result, we decided to explicitly declare municipality, county, and state, all in the P131 field, with the hope that consensus on a solution can be reached in the future. In the meantime, our queries are functioning well. We reason it is better to have too much well-sourced information, rather than too little.
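A toy model makes the problem concrete. In the Python sketch below, all place and organization names are hypothetical. Walking the hierarchy upward (the equivalent of a transitive `wdt:P131+` path in SPARQL) reports a library as being in both counties its city spans, while an explicit, direct statement on the organization (a plain `wdt:P131` match) reports only the county it actually sits in.

```python
from typing import Dict, List, Set

# Hypothetical data: "City X" straddles two counties.
CASCADING: Dict[str, List[str]] = {
    "Library 1": ["City X"],  # only the most local entity is recorded
    "City X": ["County A", "County B"],
    "County A": ["Georgia"],
    "County B": ["Georgia"],
}

# The approach described above: municipality, county and state all declared directly.
EXPLICIT: Dict[str, List[str]] = {
    "Library 1": ["City X", "County A", "Georgia"],
}


def ancestors(entity: str, located_in: Dict[str, List[str]]) -> Set[str]:
    """Every place reachable by following 'located in' links upward."""
    seen: Set[str] = set()
    stack = list(located_in.get(entity, []))
    while stack:
        place = stack.pop()
        if place not in seen:
            seen.add(place)
            stack.extend(located_in.get(place, []))
    return seen


if __name__ == "__main__":
    # The transitive walk wrongly includes County B.
    print(sorted(ancestors("Library 1", CASCADING)))
    # The direct statements include only County A.
    print(EXPLICIT["Library 1"])
```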

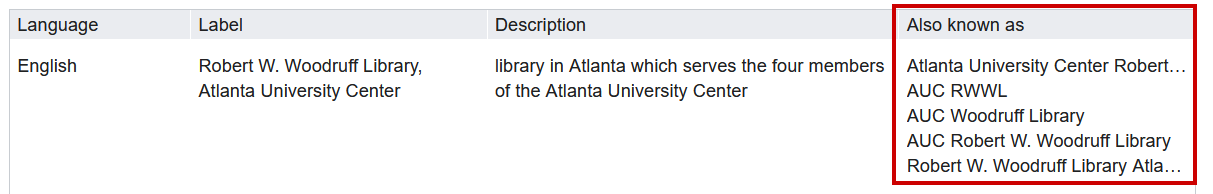

We also encountered a workflow challenge with NCH organizations’ name variations. We knew in advance that many of the organizations would appear in multiple source datasets, so we created an index file to assign an accession number to each organization. As we prepared new datasets, we compared the organization names against the index to prevent duplication of our work. We also knew that organizations’ names would vary between datasets, so we planned to check each dataset against the index using the Microsoft Excel Fuzzy Lookup Add-on to identify similar or identical organization names. Unfortunately, this method did not reliably identify duplicate records, so we changed our workflow to check the names of organizations manually in Wikidata before enriching the data. As we discovered new name variations, we added them to Wikidata as Aliases (alternative names). This improved our results by reducing duplication of work; however, a few duplicate records of organizations were still present in each dataset, and these had to be merged manually during quality control in Wikidata. A bonus of this process is that we generated a clear list of how many organizational records were edited (via the index), and that Wikidata now includes a comprehensive list of all the name variations we encountered for each organization.
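For readers who want to try an automated pre-screen before the manual check, the Python sketch below compares a new name against an index of known name variations using the standard library's `difflib`. It is a substitute illustration, not the Excel Fuzzy Lookup step we used, and as noted above this kind of matching did not replace manual checking in Wikidata. The index layout, the accession-number format, and the 0.85 cutoff are all assumptions.

```python
import difflib
import re
from typing import Dict, List


def normalize(name: str) -> str:
    """Lowercase, expand '&', and strip punctuation so variants compare fairly."""
    name = name.lower().replace("&", " and ")
    name = re.sub(r"[^a-z0-9 ]+", " ", name)
    return " ".join(name.split())


def likely_duplicates(name: str, index: Dict[str, List[str]], cutoff: float = 0.85) -> List[str]:
    """Return accession numbers whose recorded names closely resemble `name`."""
    by_name = {
        normalize(variant): accession
        for accession, variants in index.items()
        for variant in variants
    }
    matches = difflib.get_close_matches(normalize(name), list(by_name), n=5, cutoff=cutoff)
    accessions: List[str] = []
    for match in matches:
        if by_name[match] not in accessions:
            accessions.append(by_name[match])
    return accessions


if __name__ == "__main__":
    # Hypothetical index: accession number -> known name variations.
    index = {
        "GANCH-0001": ["Alma-Bacon County Public Library"],
        "GANCH-0002": ["Georgia Historical Society"],
    }
    print(likely_duplicates("Alma Bacon County Public Library", index))
```

Any hit from a screen like this is only a candidate; confirming it against the Wikidata record and its Aliases remains a manual step.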

Figure 8. Recording Labels (name variations) in Wikidata.

Originally, we planned to use free, online geocoding tools to quickly obtain geocoordinate locations for the NCH organizations. Unfortunately, since these tools rely solely on the address of the organization, they can often yield incorrect results. After a disaster, wayfinding markers or even whole buildings can be destroyed, so it was important for us to identify the physical locations of these NCH organizations; the mailing address or nearest intersection was often insufficient. As a result, we shifted to manually locating each NCH organization’s coordinates via Google Maps, which in some cases required a fair amount of research. For example, most of the technical colleges in Georgia have multiple campuses, each with its own library. Many of these libraries do not have their own webpages to reference, often exist in shared facilities (e.g. Academic Building C), and are not distinctly identified in Google Maps. By looking for online campus maps and cross-referencing with Google Maps, we were able to identify the physical location of each library.

Lastly, an unfortunate component of this project was the emotional toll it took on us. Georgia has a diverse and challenging history, reflected in its organizations and their records: the Confederacy, American slavery, Jim Crow, Civil Rights, Indigenous People and sacred land, BIPOC, and the LGBTQIA+ community are all part of the state’s history. There are organizations in Georgia who celebrate those that defended the practice of slavery, and there are also organizations who celebrate those that sought to abolish it. This project was completed at the same time as international protests were taking place against systemic and police violence committed against Black Americans. Seeing this same battle for justice play out on American streets while we worked on these records was uncomfortable, to say the least. Yet, no matter how we may personally feel about parts of Georgia’s history, we endeavored to record it all fairly and equally. No record should be erased because we are uncomfortable. Our discomfort should push us into discourse about what each record means to the state of Georgia then and now.

To help other organizations who may adapt or replicate this project, we have documented the challenges that we encountered and how we addressed them [15].

Sustainability

We recognized early on that this project would need a state-level institutional home, so we worked with our partners to create a sustainability plan as part of the project. GALILEO, Georgia’s virtual library, graciously provided website hosting for the GaNCH website. The Georgia Public Library Service’s Archival Services and Digital Initiatives Office and GALILEO both volunteered to provide ongoing staff support for dataset maintenance via annual updates.

To help ensure long-term sustainability of the project, we built a semi-automated emailer on the back-end of the GaNCH website to send out reminder emails to NCH organizations. Since we gathered many organizations’ contact email addresses during the enrichment stage of the data workflow, update reminder emails can be sent annually to confirm accuracy of, or obtain updates to, organizational information. If inaccurate, the NCH organizations can reply to provide updates. If the email bounces, staff can check to see if the organization still exists.

If we were to repeat this project, we would recommend asking for additional funding in two areas: to pay for promotional activities and to pay for an additional graduate student assistant. We did not originally allocate any funds for travel costs to spread the word about the project, so our opportunities in this area were limited. However, in the wake of COVID-19, many conferences transitioned to a completely virtual format and waived their registration fees for presenters. This provided us with additional opportunities to present on GaNCH at the state and national level. Although we were able to accomplish a great deal, we had to pull in additional staff members Jessica Leming and Alex Dade from the Woodruff Library’s Digital Services Department to assist with record enhancements and quality control reviews to meet deadlines, for which we are forever grateful. With additional funding for a second graduate student assistant, we could have expanded the project to include additional NCH organizations (such as county Clerk of Court offices). We also could have further enriched the records for the organizations that were included; with limited time, we had to cut back on recording social media accounts, inception dates, and parent organization/subsidiary organization relationships part-way through the project to ensure we were able to capture core data for more organizations.

Impact & Future Possibilities

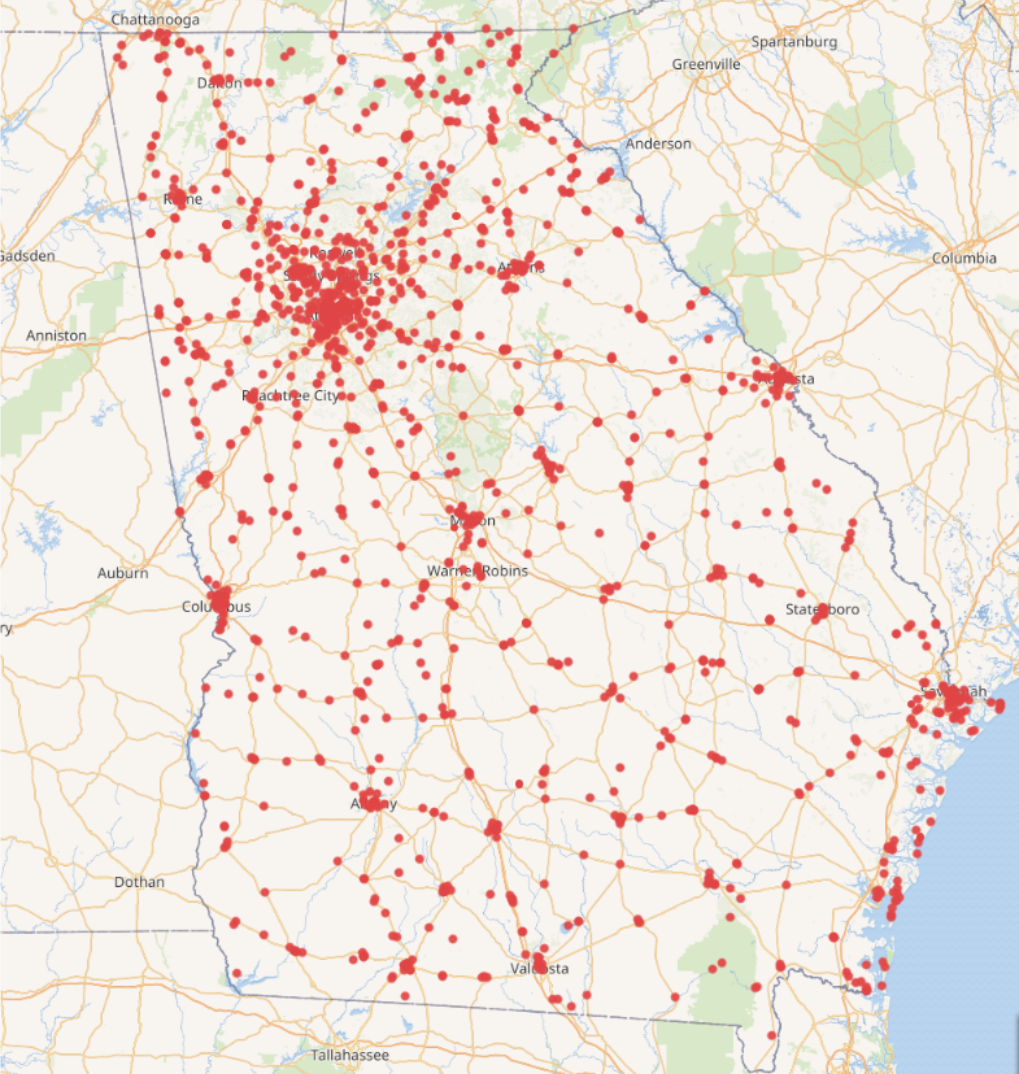

At the beginning of this project, there was no easy way for cultural heritage emergency responders to reach out to NCH organizations before or after a disaster. The data available was scattered across the internet in disparate databases and tables, and was rarely up-to-date. What started in 2018 as 40 GLAM institutions mapped in Wikidata has now grown to 1,916 NCH organizations indexed as of August 2020.

Table 1. GaNCH Project Impact Overview.

| Measure | Total |
| --- | --- |
| Source Datasets | 13 |
| Properties | 19 |
| Total NCH organizations (de-duplicated) | 1,916 |
| Total Wikidata edits (all project staff) | 15,501 |

We retrieved, verified, updated, enriched, and uploaded thirteen datasets gathered from our partner organizations, each with as few as 15 to as many as 556 records. Additionally, we have broadened the scope of our search queries to meet the needs of cultural heritage emergency responders. For example, our search queries now include historic districts which, although they don’t have contact information, are important for GEMA fly-overs to assess damage of historic properties not associated with cultural heritage organizations. As a result, our largest search query now includes 1,960 results.

Figure 9. Wikidata in 2020: NCH organizations in Georgia with coordinate information: 1,960 institutions.

However, the first real test of the web application’s effectiveness came in September 2020 as Hurricane Sally approached the southeastern U.S. In anticipation of heavy rainfall and flooding, members of HERA sent out emails to 330 NCH organizations in GEMA Regions 1, 3, 4 and 7. Thankfully, none of the emailed organizations requested emergency assistance following the storms, but HERA did receive gratitude from organizations for reaching out. From the email blast, we experienced a 9% bounce rate where emails could not be delivered. Not all organizations publicly provide email addresses, so we knew that we were not reaching everyone in these regions, but broadcast emails are a good way to get the word out quickly that emergency assistance is available. For more acute disasters such as a tornado, emergency responders can reach out to organizations on a county-by-county basis using the table of all available contact methods, including email, phone, Facebook, Twitter, and website forms. We can now follow up with the organizations whose emails bounced to see if the organizations are still active, and if so, which email address they can provide (if any), for emergency contacts.

A pleasant surprise in working on the project was seeing how Wikidata was being used by others to store and discover additional information about the NCH organizations. During the project, a Wikidata user uploaded visitor counts for all Georgia Public Library System libraries from 2015-2017. Since Wikidata is an open system, additional information can be added to these records, such as budget changes, collection statistics, branch openings and closings, and other facts, all stored as linked open data. Likewise, the data that we entered for disaster preparedness and recovery is now freely available to be repurposed for tourism, planning infrastructure, performing historical analysis, or other topics we have yet to imagine.

As such, we hope our website will not only serve as a one-stop location for Georgia’s cultural heritage emergency responders to identify NCH organizations at risk but will also serve as a tangible introduction to linked open data for cultural heritage workers of all kinds.

In order to make this project a model for other states, we have made all project documentation and data freely available via GitHub at https://github.com/AUC-Woodruff-Library/GaNCH-data and https://github.com/AUC-Woodruff-Library/GaNCH-website. The GaNCH website is available at: https://ganch.auctr.edu/.

Notes

[1] National Oceanic and Atmospheric Administration. November 24, 2020. Record-breaking Atlantic hurricane season draws to an end. [Internet]. Available from: https://web.archive.org/web/20201201212728/https://www.noaa.gov/media-release/record-breaking-atlantic-hurricane-season-draws-to-end

[2] Heritage Preservation. 2005. A Public Trust at Risk: The Heritage Health Index Report on the State of America’s Collections. [Internet]. Washington (DC): Heritage Preservation. Available from: https://web.archive.org/web/20200820135617/https://www.imls.gov/sites/default/files/publications/documents/hhifull_0.pdf

[3] Planning [Internet] [accessed 12/2/2020] Atlanta (GA): Georgia Emergency Management and Homeland Security Agency. Available from: https://web.archive.org/web/20201023073629/https://gema.georgia.gov/what-we-do/planning

[4] Landis, Cliff. 2019. “Linked Open Data in Libraries” in K. J. Varnum (Ed.), New top technologies every librarian needs to know (pp. 3-15). Chicago: ALA Neal-Schuman. http://hdl.handle.net/20.500.12322/auc.rwwlpub:0029

[5] Historical and Cultural Organizations Directory. Georgia Historical Records and Advisory Council. https://web.archive.org/web/20200804170808/https://georgiaarchives.org/ghrac/directory

[6] Edit group by Clifflandis: United States (96014bf). EditGroups. https://web.archive.org/web/20200312135440/https://tools.wmflabs.org/editgroups/b/OR/96014bf/

[7] Wikidata iFrame test. CliffLandis.net. https://web.archive.org/web/20200312135539/http://clifflandis.net/WikiData_GA-CHO-DR_test.html

[8] GaNCH Project Partners List. GitHub. https://web.archive.org/web/20201208160419/https://github.com/AUC-Woodruff-Library/GaNCH-data/blob/master/docs/project_partners.md

[9] GaNCH Data Dictionary. GitHub. https://web.archive.org/web/20201208160608/https://github.com/AUC-Woodruff-Library/GaNCH-data/blob/master/data/data_dictionary.md

[10] GaNCH Workflow Manual. GitHub. https://web.archive.org/web/20201208160839/https://github.com/AUC-Woodruff-Library/GaNCH-data/blob/master/docs/workflow.md

[11] Wikimedia Foundation – Maps Terms of Use. https://foundation.wikimedia.org/wiki/Maps_Terms_of_Use

[12] GaNCH Website. GitHub. https://web.archive.org/web/20201208181502/https://github.com/AUC-Woodruff-Library/GaNCH-website

[13] Beginner Rails 6 Tutorial Series. YouTube. https://www.youtube.com/playlist?list=PLmz7pZjxnS1VlA-k5qpji6aYDYys0MbIA

[14] located in the administrative territorial entity (P131). Wikidata. https://web.archive.org/web/20201206193449/https://www.wikidata.org/wiki/Property:P131

[15] GaNCH Addressing Challenges. https://web.archive.org/web/20201208161050/https://github.com/AUC-Woodruff-Library/GaNCH-data/blob/master/docs/challenges.md

About the Authors

Cliff Landis is Digital Initiatives Librarian at the Atlanta University Center Robert W. Woodruff Library. His research interests include linked open data, archival technologies, digitization, metadata, and the coevolution of humanity and technology.

Christine Wiseman is Assistant Director of Digital Services at the Atlanta University Center Robert W. Woodruff Library. Her research interests include cultural heritage emergency preparedness and archival and digital preservation.

Allyson F. Smith is Graduate Assistant at the Atlanta University Center Robert W. Woodruff Library.

Matthew Stephens is a web developer who has collaborated with the Atlanta University Center Robert W. Woodruff Library since 2015.
